We are bound!

Technology is taking over our daily lives. Regardless of age, gender, ethnicity, career, or economic status, almost everyone is engaged with a smartphone. These devices can make us forget who we are and what we are capable of, and they consume valuable time that could be spent with real human beings, sharing real emotions. For many people, the phone, computer, tablet, and other high-tech devices have become not just objects but their closest companions. In real life, when we feel lonely, anxious, or sad, we might seek the attention and affection of another human being to console us. But what if some lonely people have no one to turn to with their grief? The question has positive as well as negative sides.

In the past, people had fewer needs. As society evolved and technology brought about a revolution, those needs became more and more complicated. Technology and social media go hand in hand and play a significant role in everybody’s lives. We are heavily influenced by social media, and some of us sit at the extreme, as addicts. From morning until the end of the day we spend hours on social media, yet we still feel isolated. Narcissistic personality disorder seems to be spreading through society, largely owing to a lack of empathy and a craving for admiration from others.

Social Media

Social media sites let us form numerous casual relationships, which consume our time and psychic energy and can come to seem more significant than the meaningful relationships we foster in the real world. People tend to post intimate details of their lives, giving others access to their private affairs. The pleasure we derive from sharing tidbits of our lives on social networks can lead to social media addiction. People who want public attention tend to post frequently and to judge themselves by the likes and comments they receive. Their posts exaggerate the reality of their lives and make them appear more desirable to associate with. This might eventually lead to depression. Many individuals spend so much time on social media reading news, playing games, and chatting with friends that it interferes with their daily lives. They constantly check others’ opinions and posts to quell the fear of missing out, and they often compare their own lifestyle with the exaggerated version that other people present online.

Nowadays, text messaging and social media messages have become commonplace, and this has reduced face-to-face human interaction. Interacting with others takes little effort because people have isolated themselves behind online identities. People use abbreviations and emojis to express their emotions, which replaces direct communication. This will isolate human beings more than ever and weaken the felt need to socialize.

Guilt & Stress

Put simply, people in modern society are overwhelmed by guilt over their lack of human contact and by the stress of day-to-day activities. We crave interaction because we need mental stimulation, and, as stated before, we turn to all kinds of social media, such as Facebook, Twitter, Instagram, and YouTube, to get it. Yet despite these remedies, people tend to feel lonely and isolated due to a lack of empathy. The more we socialize on social media, the more isolated we feel, and that natural urge pushes us to keep seeking connections. We also seek validation from other people on personal matters. If you make a painting and you love it, that’s great. If other people love it too, that’s better. Simply put, we humans are attention seekers. What if devices that mimic realistic personal responses are now taking the place of their real human counterparts?

“Generally, when people feel socially excluded, they seek out other ways of compensating, such as exaggerating their number of Facebook friends or engaging in pro-social behaviors to find other means of interactions with people. When you introduce a humanlike product, those compensatory behaviors stop,” says Jenny Olson, study co-author and marketing professor at the University of Kansas, in a statement.

Personal Assistants

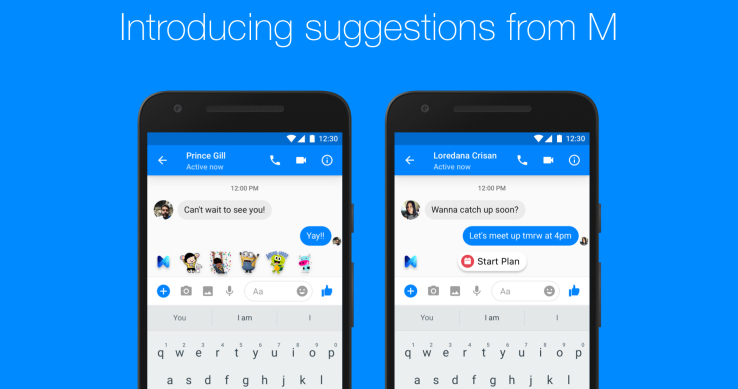

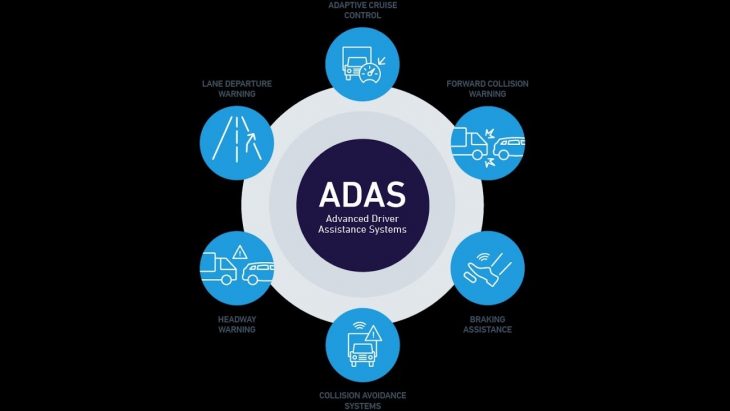

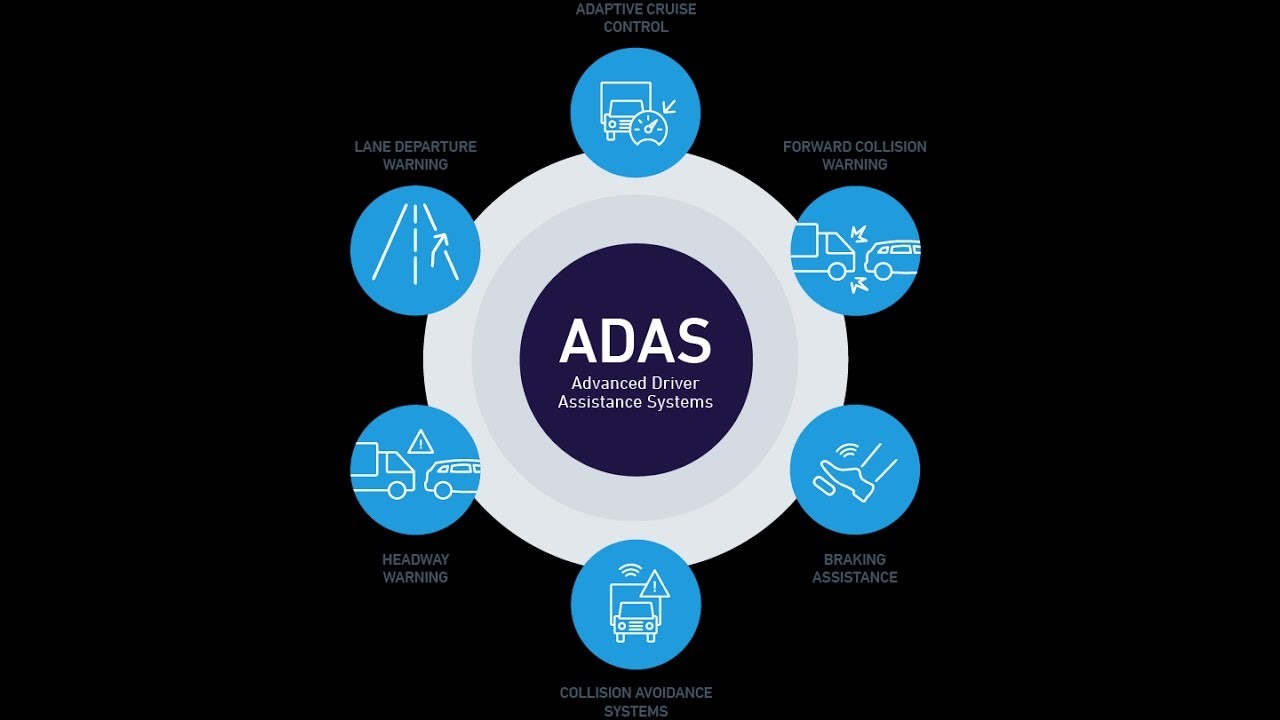

This is where intelligent personal assistants come in. Intelligent personal assistants, also called virtual assistants, are software applications or devices that can assist the user with tasks, planning, or retrieving information, much as a human assistant would. The technology typically draws on the sensors of the device it runs on for contextual awareness, which lets it deliver more accurate and relevant information. Modern intelligent personal assistants can learn from previous input, so they can offer better, more personalized results to the user. Examples aimed at the general consumer include Apple Siri, Microsoft Cortana, and Amazon Echo.

“Alexa isn’t a perfect replacement for your friend Alexis. But the virtual assistant can affect your social needs,” says James Mourey, lead author and marketing professor at DePaul University, in a statement.

Harper’s Magazine ran part of a conversation between Chinese journalist Chen Zhiyan and three chatbots: Chicken Little, Little Ice, and Little Knoll. The conversation took place on WeChat, the instant messaging and calling app. What Zhiyan found from these three chatbots was that many people were confiding their concerns to them, and that these people felt lonely, mostly at night, when only the chatbots could keep them company.

Despite the growing trend of finding solace in the virtual arms of a machine, it is important to nurture real-life relationships and develop self-awareness. If we spend more and more time snuggled up with technology, we have to keep asking ourselves, ‘Are these devices bringing us closer together or further apart?’ Though the answer might depend on which decade you were born in, the result might be the same.

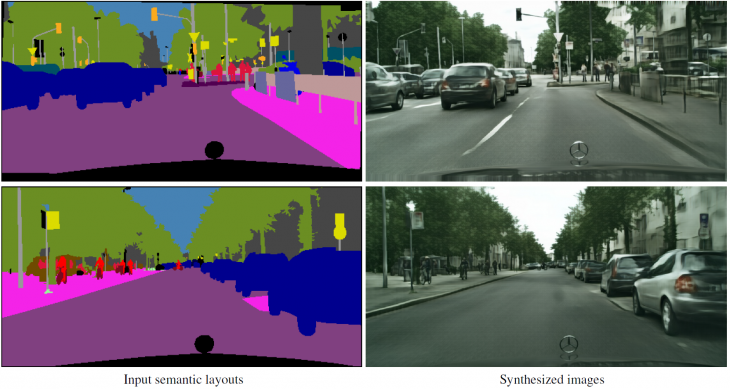

Google Maps is a free service from Google that can be used to make everyday life smarter. It can be used to find routes, check what traffic is like, and look up places that matter to us. To keep providing a good service as demand grows, Google carries out various research projects and experiments. With the Street View service integrated, Google Maps is updated almost every day. Street View cars collect billions of photographs, and analyzing these images manually is not practical. Building an automated system for this is the task assigned to Google’s Ground Truth team.
